Software does not run on category theory; it runs on real hardware. Today, it runs on superscalar CPUs with multi-channel memory units that move gigabytes per second, and on NVMe SSDs with disk access on the scale of hundreds of microseconds. Network cards scale up to 100Gbps, and more recently chalcogenides (metalloid alloys) give us 1-microsecond latencies for durable writes with 3D XPoint.

Hardware is fast, but most people do not architect software to take advantage of these hardware advances. At worst, running software on significantly better hardware crashes your app because of latent bugs. This is what ‘feels’ slow, because it is. To be more precise, the latency (time to do X) improvements in hardware are simply wasted in an era of no free lunch.

To understand the money you are leaving on the table, run a fio sequential read/write test with libaio on an XFS filesystem on an x86-64 Linux machine with a recent kernel. Now download any Apache queue and test it in the same environment. The result is anywhere from a 10-100x performance loss. Obviously, some slowdown is expected with anything more complex than saving and reading random bytes sequentially. This is, however, a lens into the performance gap that we have created with software.

On my laptop, a simple `dd if=/dev/zero of=~/tmp.out` writes data at 1.1GB/sec, roughly the limit of my Samsung NVMe SSD. The best that open source queues can do today is ~300MB/sec on the same hardware. Locks, cache contention, in-kernel structures, and garbage collection, among many others, are the culprits. The gap is worse if you are trying to read data; at least while you are writing, modern drives and write buffers have got your back.
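To get a rough feel for the raw sequential write number on your own machine, here is a minimal Python sketch in the spirit of the `dd` run above. It is not fio, it does not use libaio or direct I/O, and writes go through the page cache until the final `fsync`, so treat the figure as a ballpark rather than a benchmark. The function name `sequential_write_throughput`, its default sizes, and the temporary file location are assumptions made for illustration.

```python
import os
import tempfile
import time


def sequential_write_throughput(path, total_bytes=64 * 1024 * 1024,
                                block_size=1024 * 1024):
    """Write total_bytes of zeros to path in block_size chunks.

    Returns the observed throughput in MB/s (1 MB = 10**6 bytes).
    Hypothetical helper: a rough stand-in for `dd if=/dev/zero of=...`.
    """
    block = bytes(block_size)  # a buffer of zeros, like /dev/zero
    written = 0
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)
    start = time.perf_counter()
    try:
        while written < total_bytes:
            # The last chunk may be shorter than block_size.
            chunk = block[: min(block_size, total_bytes - written)]
            # os.write may write fewer bytes than requested, so count
            # what was actually written.
            written += os.write(fd, chunk)
        # Flush to the device so the page cache does not hide the cost.
        os.fsync(fd)
    finally:
        os.close(fd)
    elapsed = time.perf_counter() - start
    return written / elapsed / 1e6


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        rate = sequential_write_throughput(os.path.join(tmp, "tmp.out"))
        print(f"sequential write: {rate:.0f} MB/s")
```

Comparing this number against what your queue sustains on the same disk gives a first-order view of the gap. For anything you plan to act on, use fio with settings that match your workload.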

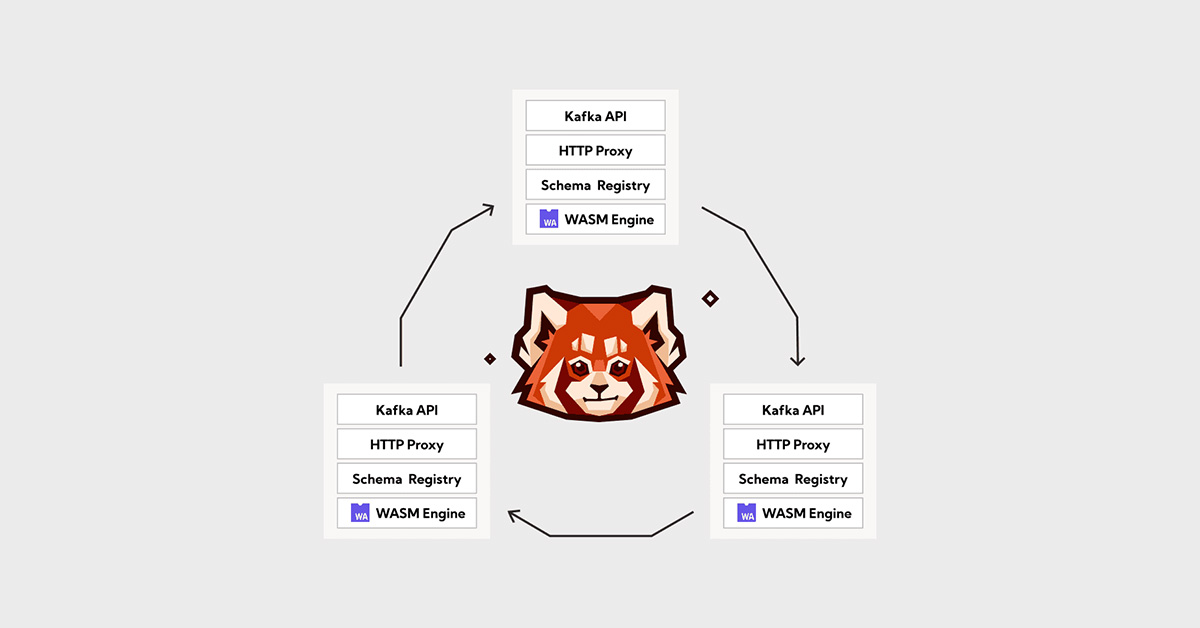

When we set out to build Redpanda, one could not reason intelligently about trading off latency for throughput, or vice versa, with any existing queue. Step-function degradation per producer/consumer and saturation points that moved randomly with the phase of the moon meant that running a system at scale necessitated massive overprovisioning.

The reality of software is that hardware is the platform, with known constraints and physical limits. The reason for the massive overprovisioning is that we have ignored how the hardware works: the tradeoffs implicitly built into the platform and the speeds at which data is accessed, transformed, and shipped. At scale, understanding the hardware means a 3-10x reduction in cost simply by running software that maps to the real world, to real hardware, not just category theory.
